I’ve been building product teams for years now, and I’m watching something shift in real time. Every founder I talk to is anxious about the same thing: if AI can code, what happens to our programmers? And honestly, if you’re a programmer yourself, you probably lie awake thinking about it too.

Let me be direct. The anxiety is real, but the conclusion is wrong.

Your programmers don’t become less valuable. They become different kinds of valuable. And that’s actually good news, if you understand what’s changing.

The Real Threat Isn’t AI. It’s Staying in the Same Role.

Here’s what’s happening.

AI coding agents such as Claude, ChatGPT, GitHub Copilot, and whatever comes next are getting extremely good at one specific thing: turning a detailed specification into working code. In many cases they’ll do it faster, cheaper, and with fewer bugs than humans.

That part is not debatable. It’s happening.

But here’s what people miss: the spec came from somewhere. Someone decided what to build. Someone understood the real problem. Someone weighed the trade-offs. Someone said “this is the right way to solve this.” That person made a judgment call.

AI can’t do that. Not yet. Maybe not ever in the way that matters.

The programmers who will be fine, and who will actually become more valuable, are the ones who stop thinking of themselves as coders and start thinking of themselves as problem-solvers. And I know that sounds like corporate speak, but it’s not. Let me explain what I mean by breaking it down into three phases.

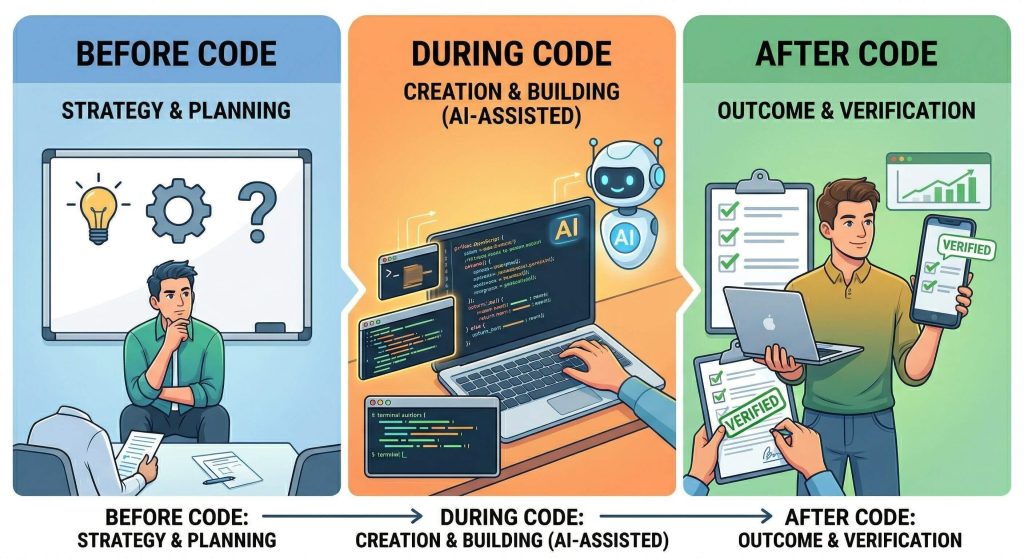

Before Code, During Code, After Code

I’ve started thinking about product development in three distinct phases, and this is the framework that’s changed how I think about programmer value.

Before Code

Before Code is where you figure out what to build. This is research, questioning, exploring options, designing the architecture, writing the specification, understanding the real problem underneath the stated request. This is where a programmer who thinks like a strategist shines.

They ask “why are we building this?” They challenge assumptions. They surface risks. They say “we could solve this three different ways, here’s what each costs us.” They write a spec so clear that execution becomes almost mechanical.

During Code

During Code is execution. Writing the code, testing it, debugging it, making it work. This is where AI agents are getting dangerously good. If your programmer’s value is purely in this phase, they’re in trouble. This is the replaceable part.

After Code

After Code is verification and ownership. Does this actually solve the problem? Is it good enough? What needs to change? Does it work in the real world? Who owns it if something breaks? This is where your programmer becomes the quality gate, the person accountable for outcomes, not just features.

Here’s the shift: your programmer should own Before Code and After Code. During Code is where AI helps.

What Your Programmer Should Actually Be Doing

Let me paint a picture of what I’m seeing in my own teams when this works well.

A growth lead comes to a programmer and says: “We need a feature that lets users export their data in CSV format.” That’s the request.

- The programmer who operates in the old model says: “Okay, I’ll build that.” And they go write code.

- The programmer who’s adapted to the new world says: “Let me understand this better.” And they start asking questions.

They ask:

- Why does the user need to export?

- What are they doing with the data after they export it?

- How many rows are we talking about?

- Are there performance implications?

- Which formats do users actually need?

- What happens if the export fails halfway through?

- Do we need to notify them?

- How do we handle really large datasets?

Then they disappear for a day and come back with a two-page spec. It includes the actual workflow, the data models involved, the edge cases, the performance constraints, and three different approaches to solving this problem.

They’ve ranked each approach: this one ships fastest but we pay for it later in maintenance; this one takes twice as long but scales perfectly; this one is clean but depends on a third-party tool we’re not sure about.

They’ve done the thinking so thoroughly that the actual coding, including building it, testing it, and shipping it, becomes almost routine. And importantly, they’ve already thought through what could go wrong, so they catch issues before they become problems.
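To make “almost routine” concrete, here is a minimal sketch of what the coding step might look like once the spec has answered two of the questions above: how to handle large datasets, and what happens if the export fails halfway through. Everything in it is an assumption for illustration, not something from a real codebase: the function name `export_csv`, its parameters, and the choice to stream rows into a temporary file and only publish it on success.

```python
import csv
import os
import tempfile
from typing import Iterable, Sequence


def export_csv(rows: Iterable[Sequence[object]],
               header: Sequence[str],
               dest_path: str) -> int:
    """Stream rows to a CSV file and publish it only if the whole export succeeds.

    Hypothetical helper: `rows` can be a generator, so the full dataset
    never has to sit in memory at once.
    """
    dest_dir = os.path.dirname(os.path.abspath(dest_path))
    # Write to a temp file in the same directory so the final rename stays
    # on one filesystem.
    fd, tmp_path = tempfile.mkstemp(dir=dest_dir, suffix=".csv.tmp")
    count = 0
    try:
        with os.fdopen(fd, "w", newline="", encoding="utf-8") as f:
            writer = csv.writer(f)
            writer.writerow(header)
            for row in rows:
                writer.writerow(row)
                count += 1
        # Only a complete export ever appears at dest_path.
        os.replace(tmp_path, dest_path)
    except BaseException:
        # A failure halfway through leaves no partial file behind.
        if os.path.exists(tmp_path):
            os.remove(tmp_path)
        raise
    return count
```

The code itself is short. The decisions that shaped it (stream instead of load, never show the user a half-written file) came out of the questions, not the typing.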

Then they ship it. Then they check in. Is it actually solving the problem? Are users happy? What’s the feedback? What would they do differently next time?

That person is irreplaceable. AI isn’t replacing them. AI is helping them do their job better and faster.

The Three Zones

Let me be really specific about where the value is now:

1. High Risk Zone (Replaceable soon)

Writing boilerplate code, routine feature implementation, following a detailed spec line by line, testing for obvious bugs, code review as pattern matching, basic documentation. This is where AI is strong and getting stronger. If this is where you spend your time, you should be worried.

2. Medium Risk Zone (Uncertain)

Mentoring other engineers, making code architecture decisions, optimizing performance, debugging complex systems. AI is starting to help here, but it’s not yet replacing the judgment of someone who truly understands your codebase and your team. The risk is real, but there’s still time to shift.

3. Low Risk Zone (Safe)

Deciding what to build, understanding the real user problem, designing systems that scale, writing specs that prevent mistakes, defining quality standards, owning outcomes, bridging the gap between what the business needs and what’s technically possible. This is where you become indispensable.

AI tools will help you be faster at this work, but they won’t replace your judgment.

The game is about which zone you operate in.

What This Means for Your Team

If you’re a founder or team lead reading this, here’s what I’ve started doing differently:

I’m asking my programmers to spend more time in research mode and less time in coding mode. Before they write a line of code, I want them to have investigated the problem. I want specs that are actually thoughtful. I want them to challenge my assumptions. I want them to push back when they think we’re solving the wrong problem.

I’m also asking them to own outcomes, not just features. When something ships, I want them to care about whether it actually works in the real world, not just whether it compiled and passed tests.

And I’m being honest about what’s happening. I’m saying:

“AI is going to make certain parts of your job obsolete. The code-writing part. That’s not your value anymore, because that’s a tool. Your value is your judgment. Your ability to think clearly about problems. Your willingness to question assumptions. Your ownership of whether something actually works.”

Some of my team members got it immediately. Some resisted. Some are still figuring it out. That’s normal. This is a real shift, and it takes time to reorient yourself.

For Programmers: The Path Forward

If you’re a programmer and you’re worried about this, here’s what I’d do if I were you:

- Start shifting your identity. You’re not a coder. You’re a problem-solver who happens to know how to code. You’re an engineer, not a typist. Your value isn’t in how fast you can write code; it’s in how clearly you can think about problems and how well you can make decisions.

- Spend time understanding the business. Why does your company exist? What problems do customers have? What are they willing to pay for? How do you make money? This isn’t “soft skills” stuff. This is the new hard skill. The programmer who understands business context is vastly more valuable than the programmer who just writes code.

- Get comfortable with specs and design. Learn to write clear specifications. Learn to design systems before you build them. Learn to think about trade-offs. This is where you create value now.

- Ask more questions. When someone asks you to build something, the first word out of your mouth shouldn’t be “yes.” It should be “why?” Why are we building this? Why now? What happens if we don’t? What would success look like? Are there other ways to solve this? These questions add more value than code ever will.

- Own your outcomes. When you ship something, you own whether it works. Not the product manager. Not the designer. You. That’s a different mindset, but it’s the mindset that makes you valuable.

The Bottom Line

Programmers aren’t becoming less important in an AI world. They’re becoming differently important. The ones who understand this and shift their role accordingly will be fine. More than fine, because they’ll be more valuable than they’ve ever been.

The ones who stay in the “I write code” mindset and try to compete with AI on speed will struggle. That’s not a criticism. That’s just math. AI beats humans on raw coding speed. It always will.

But judgment? Understanding problems? Making decisions about what to build? Owning outcomes? Those are still fundamentally human capabilities. Those are where the real value is.

So here’s my advice, whether you’re building a team or you’re a programmer trying to stay relevant:

Stop thinking about code. Start thinking about problems. Stop trying to be faster. Start being smarter. Stop executing specs. Start writing them.

That’s the shift. And it’s actually more interesting work than what you were doing before.